Overview

Redesigned Lokalise’s translation review flow from spreadsheet-heavy manual work into an AI-first system for faster, more consistent QA.

My role

Lead Product Designer

Team

Timeline

2023 Q2 - Q3

Impact Overview

Product adoption

29% of enterprise teams on Lokalise adopted the feature, with 19.1% monthly retention.

Lead generation

The feature attracted 804 marketing qualified leads (MQLs) who wanted to try it.

Industry recognition

Recognized as the industry's first AI-driven translation review tool.

Imagine visiting a website fully translated into your language, only to find that the translation is poor. How would you feel as a user? Or take a simpler example: would you hire me if I misspelled your company name in my cover letter?

That's why many of Lokalise's customers implement a translation review or QA stage in their localization process to enhance the quality of translations.

Conversion impact

60%

drop in conversion rates from poorly translated content

Weekly time cost

39.5h

average time spent reviewing translations per week

However, the review process itself is not straightforward. For every issue identified, proofreaders must leave comments, log the error type, and provide corrections — which is very time-consuming.

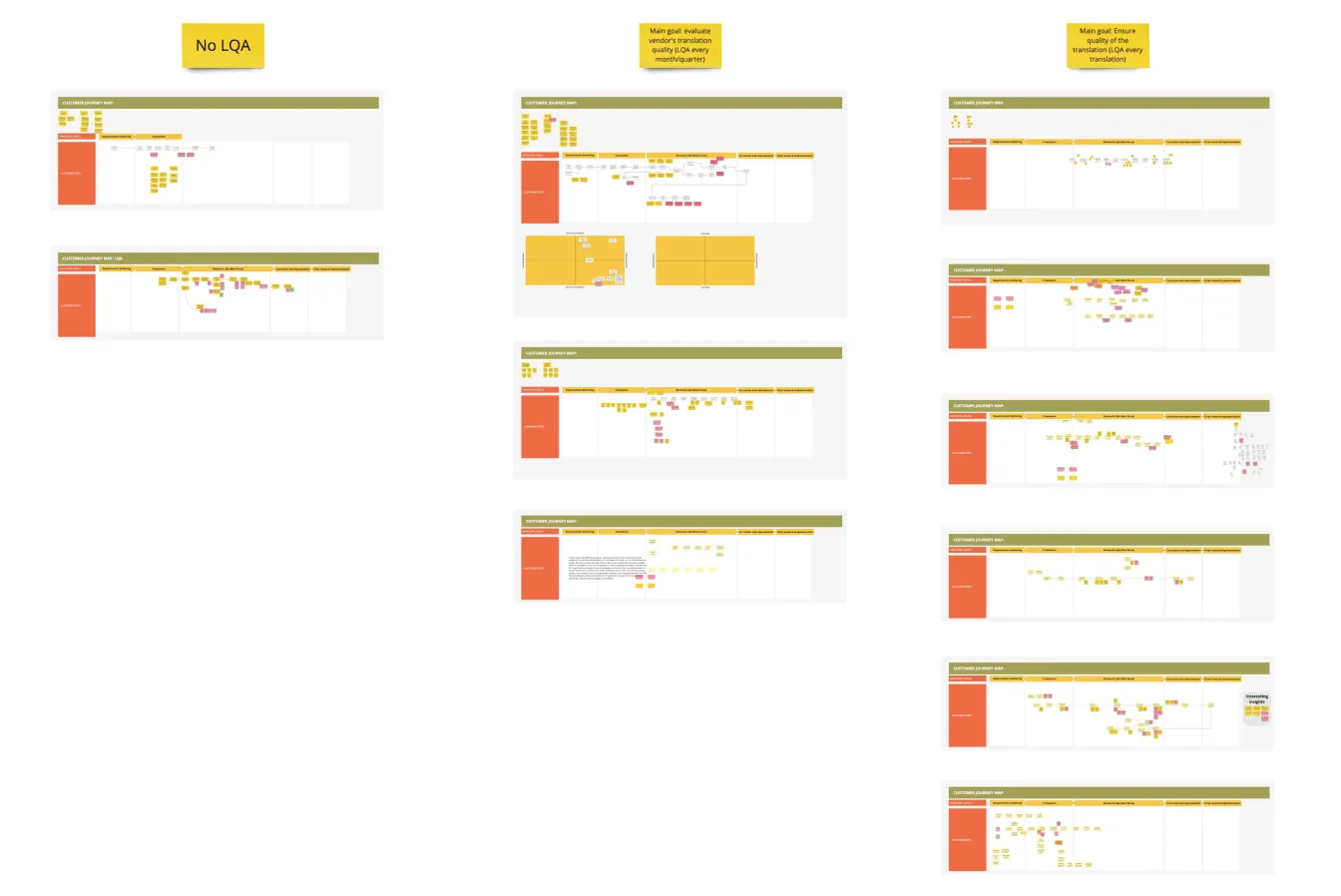

To better understand how customers review translations, we interviewed 11 customers and identified three recurring issues.

Everyone hates spreadsheets

Customers ask reviewers to log translation issues in spreadsheets, which is ineffective and hard to organize.

Impossible to review all translations

Larger customers want to review everything, but reviewing over 10,000 translations every week is unmanageable.

Different reviewers, different standards

Reviewers apply different standards, which creates many false positives and makes it hard to measure quality accurately.

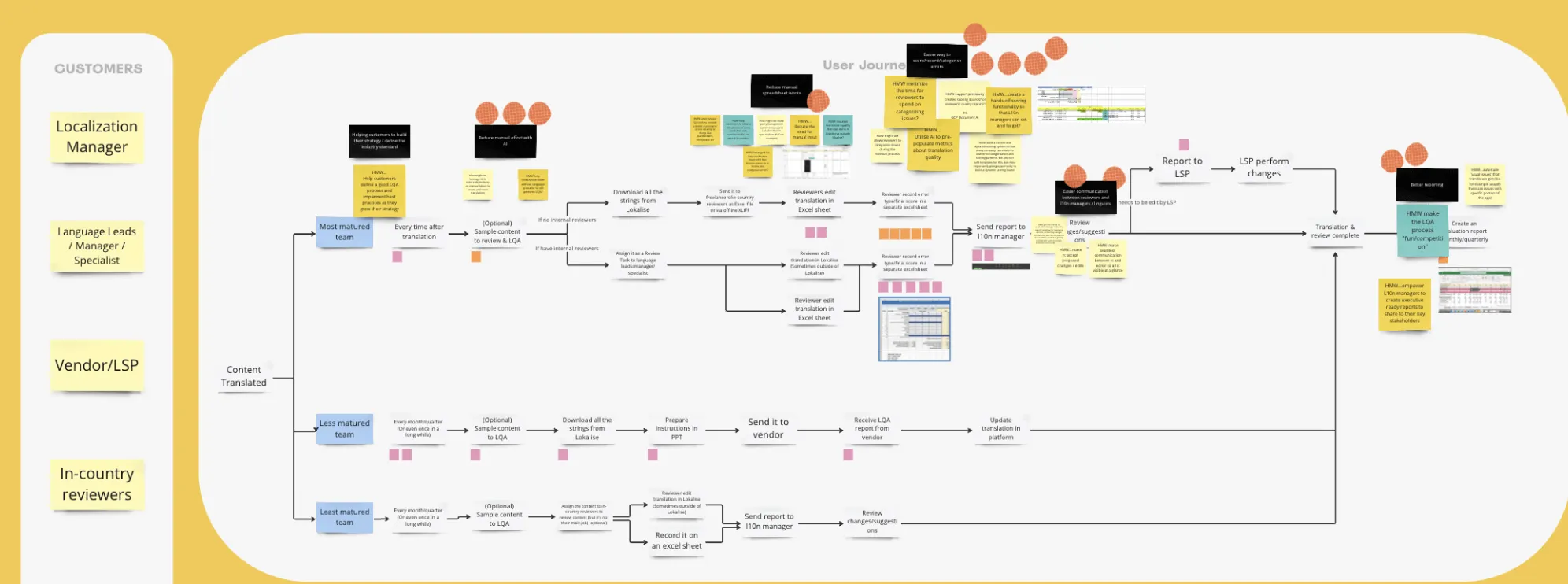

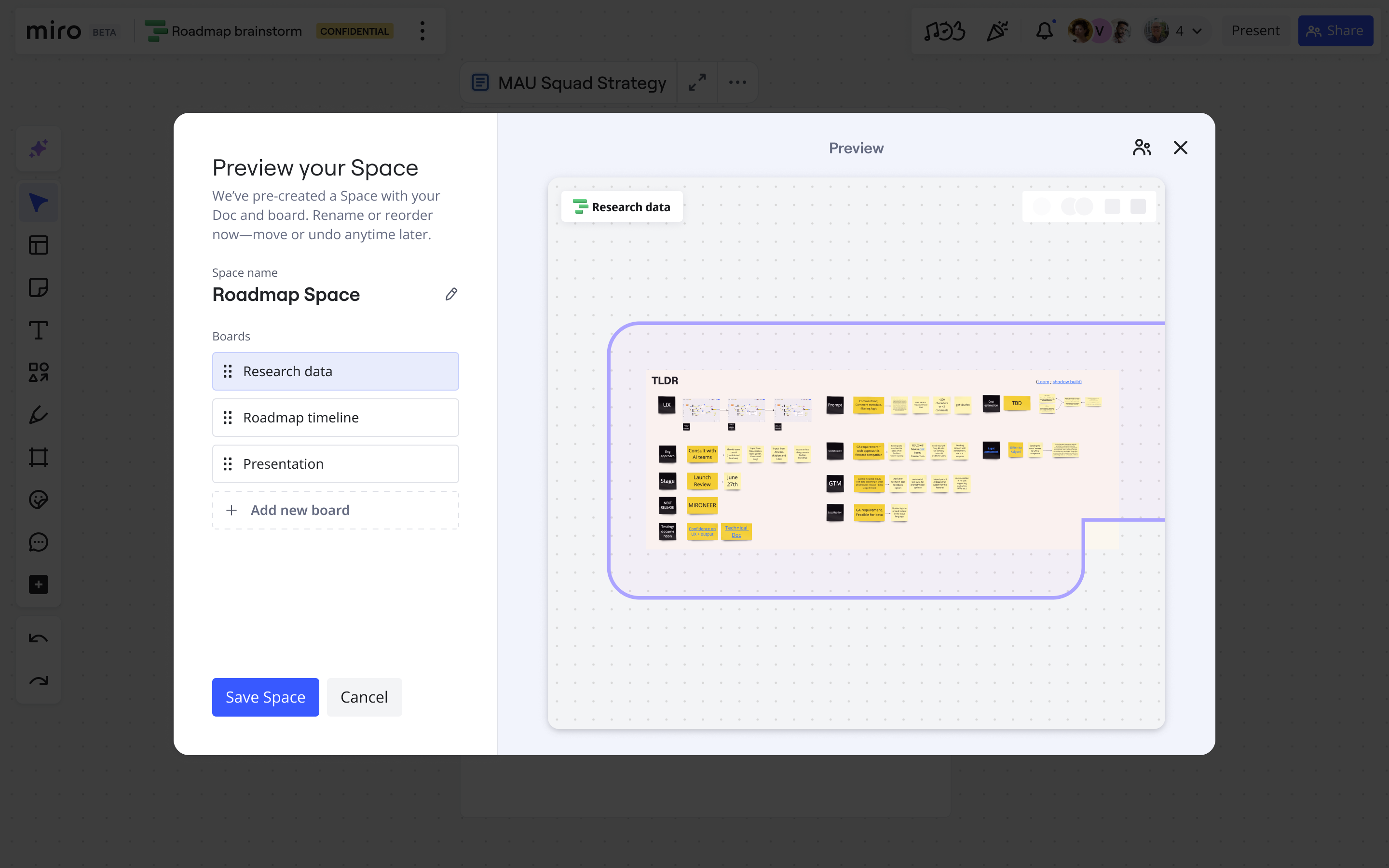

1. Internal Knowledge Gathering

I initiated the process by setting up a Miro board to map out the user journey. I invited input from Go-to-Market team members to ensure a wide range of perspectives. This collaborative approach helped us identify critical focus areas and integrate diverse insights, aligning our team from the start.

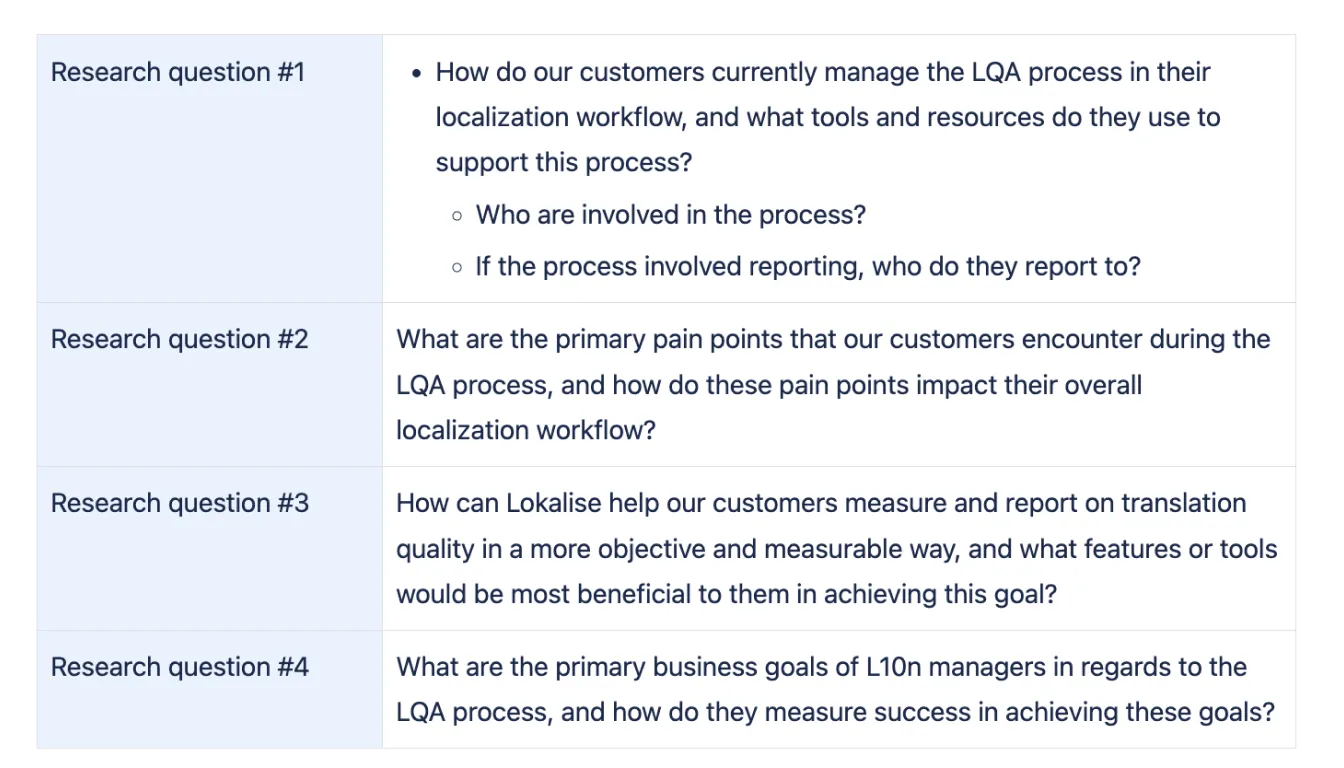

2. Identifying the gaps and planning research

Together with the Product Manager and User Researcher, I crafted the research questions and developed the interview scripts. This preparation ensured that we could gather deep insights during our conversations with users.

3. Collaborative Research

We engaged directly with our customers to map out their user journeys and identify their primary challenges. This research gave us a detailed understanding of user issues and guided our subsequent steps.

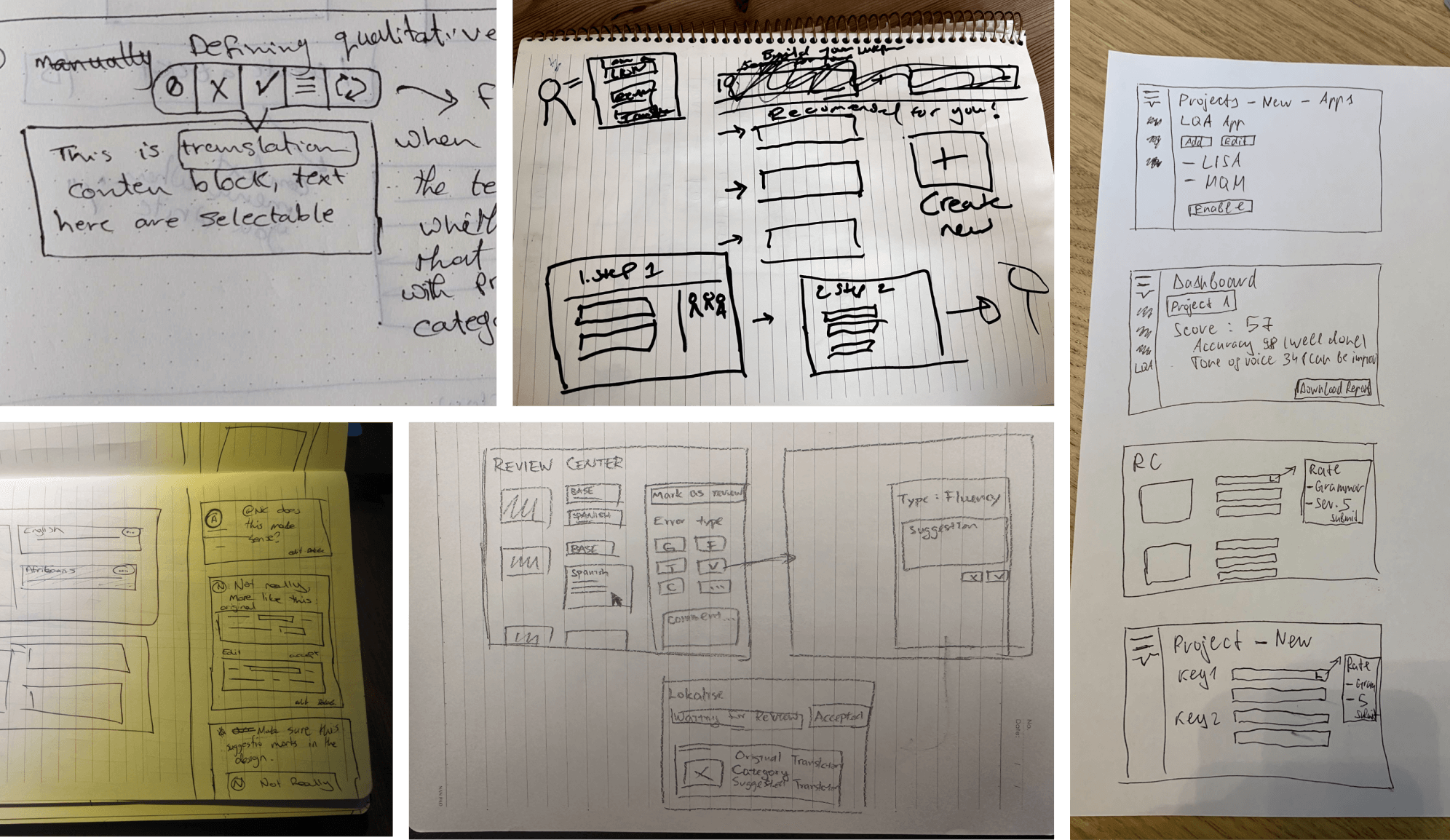

After conducting the research, I facilitated a design sprint with my PM and engineers. Over a week, we explored the problem in depth, brainstormed multiple solutions, and focused on three key questions:

How Might We

Based on the sketches and ideas from the workshop, I began prototyping various solutions and worked to align internal teams. We explored four directions:

Using the comment panel as a way to leave suggestions

Highlighting the issue area and labelling the issue

Using the comment panel with quick access to add suggestions

A suggestion widget to tag issues

An easy way to tag translation issues

Our initial solution aimed to streamline the review process for proofreaders by enhancing their ability to identify and tag errors efficiently. While reviewing translations, proofreaders can quickly label any text as incorrect, tag the specific issue type, and provide additional comments for context.

While testing with 7 customers, we found that although our initial solution reduced the pain of using spreadsheets, it still was not enough. Customers did not just want a slightly better manual workflow. They wanted something much faster and more hands-off.

Reviewing translations is crucial for them, but it is not part of their core work. They therefore want to minimize the cost of this process as much as possible.

To address this issue collectively, our team spent three days in Warsaw, where we reviewed all the feedback we had gathered. During this time, we organized a mini-hackathon, which provided us with the focused environment needed to develop and introduce an effective solution.

Welcome to the AI-first, human-assist world.

This marks the industry's first-ever AI-driven tool where all translations are automatically reviewed by AI, significantly reducing the time proofreaders spend on manual checks.

Proofreaders now only need to verify the AI's review to ensure it makes sense, streamlining the process and ushering in an AI-first, human-assisted era in translation review.

Moving to an AI-first review flow created measurable business impact and reduced repetitive QA effort for localization teams.

Adoption

29%

Of enterprise teams on Lokalise used the feature.

Retention

19.1%

Monthly retention rate among teams.

Lead generation

804

Marketing Qualified Leads attracted to try the feature.

I wrote a longer article covering the broader AI UX lessons behind this work.

Published in UXCollective · March 2024

Designing better UX for AI — 8 Tips from 20+ companies

Let's not add AI for the sake of AI. Let's prioritize user needs, not the technology.

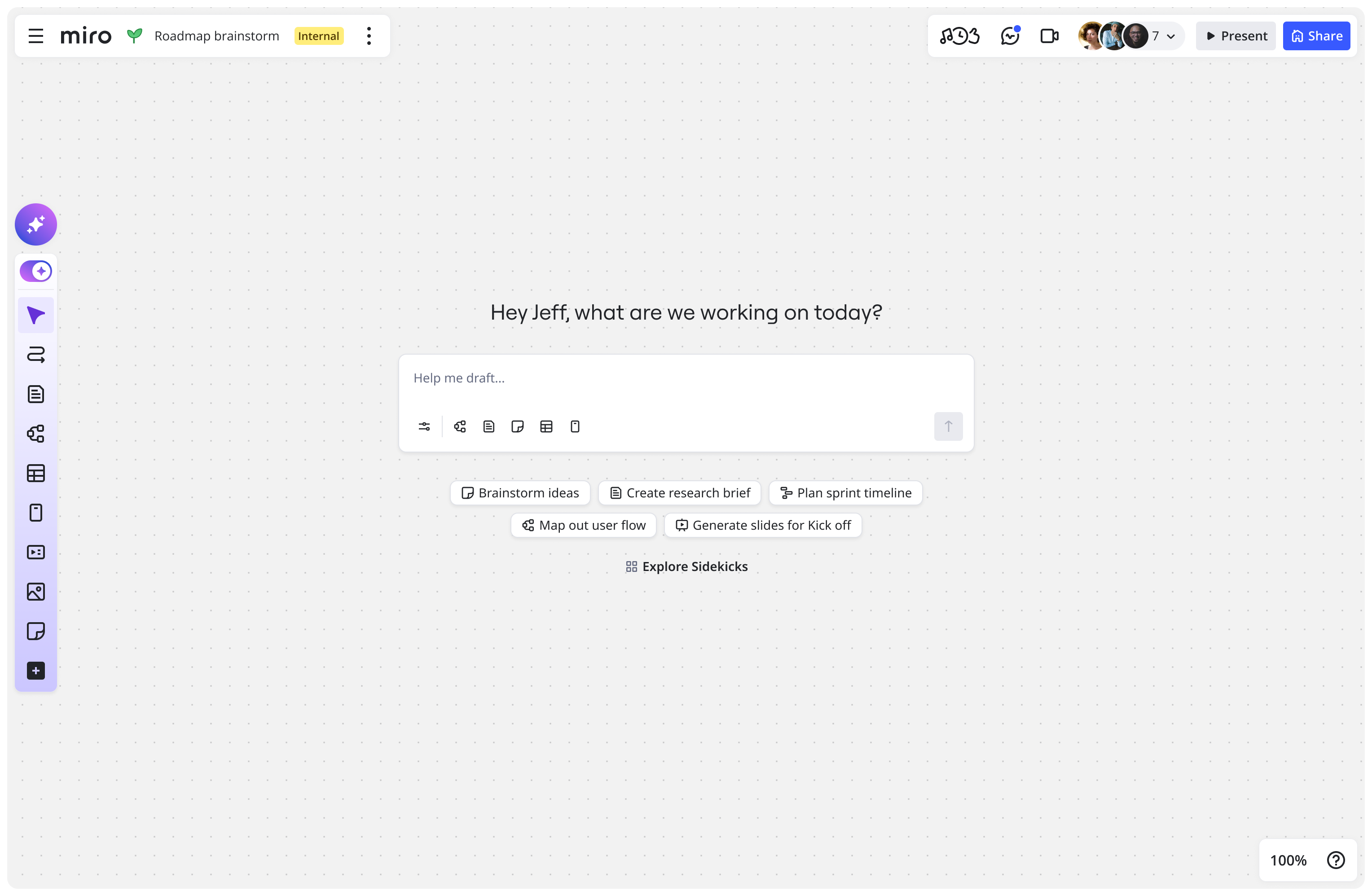

AI-first experience at Miro

A behavior-first approach to AI entry points, momentum, and collaboration workflows.

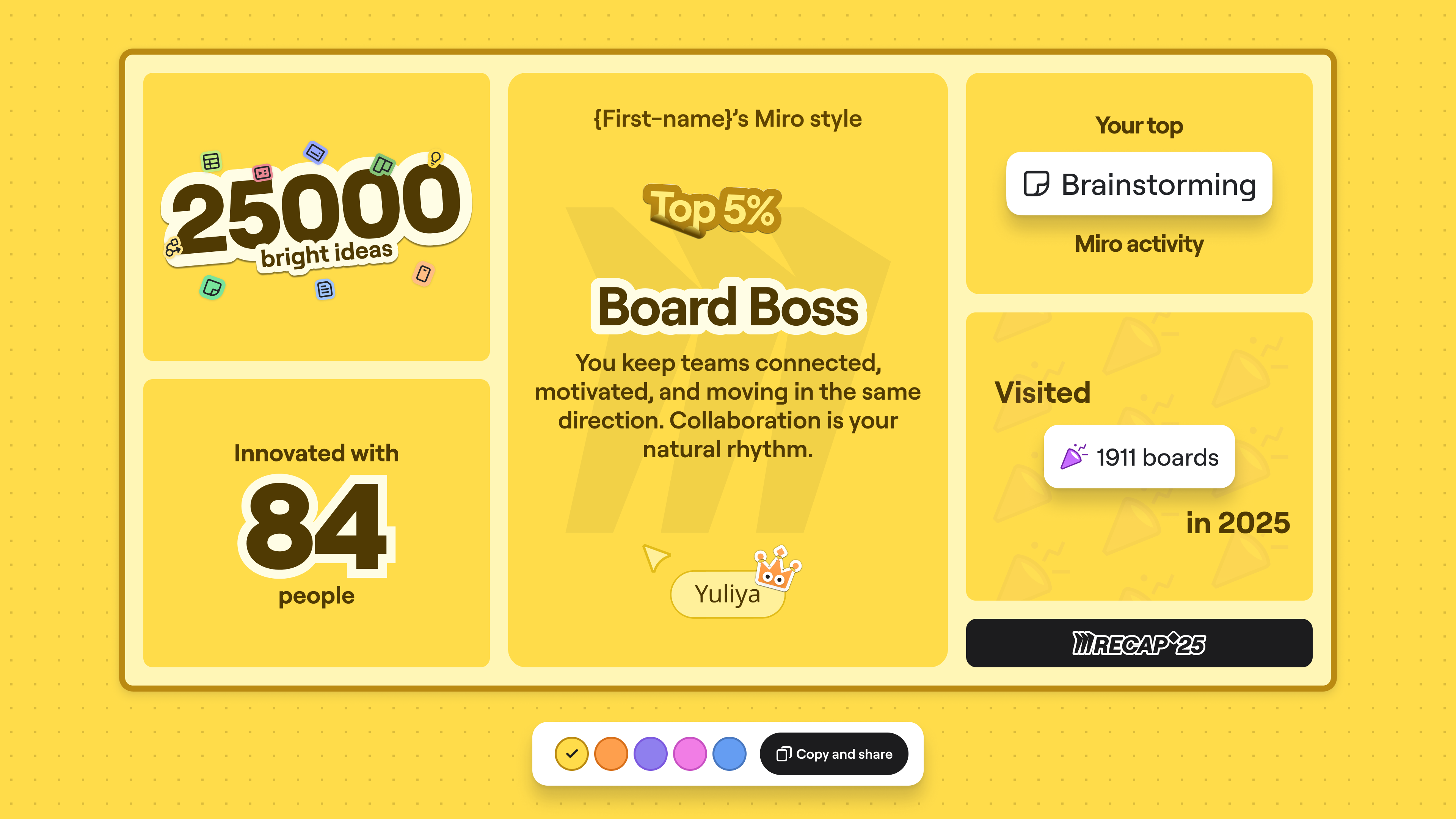

Miro recap at scale

How we built a yearly recap experience that scaled to millions while keeping completion high.

Driving adoption of Miro features

Behavior-based activation experiments that moved passive users into active creators.